To finish up: if you are working with other languages and need a full explanation of the tags, you will have to look for it in the sources listed on the models page for your model of interest. For instance, for Portuguese I had to track down the explanations for the tags in the Portuguese UD Bosque corpus used to train the model.

Available values for token.tag_ are language specific. With language here, I don't mean English or Portuguese, I mean 'en_core_web_sm' or 'pt_core_news_sm'. In other words, they are specific to the language model and are defined in the TAG_MAP (spaCy 2.x), which is customizable and trainable. If you don't customize it, it will be the default TAG_MAP for that language.

As of the writing of this answer, spacy.io/models lists all of the pretrained models and their labeling schemes. If you are working with English or German text, you're in luck! You can use spacy.explain() or consult its glossary on GitHub for the full list. If you are working with other languages, token.pos_ values are always those of Universal Dependencies and will work regardless.

You can also render the POS tags and syntactic dependencies with displaCy using style 'dep'. I'll explain how you can improve and extend spaCy's Named Entity Recognition (NER) in another tutorial.
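As a quick illustration of spacy.explain(), the sketch below looks up a few labels in spaCy's built-in glossary. The labels are picked here purely for illustration, and no trained model is needed because the lookup does not touch a pipeline.

```python
import spacy

# Labels chosen for illustration: two universal POS tags, one English
# fine-grained tag, and one entity label.
labels = ["NOUN", "NUM", "VBZ", "GPE"]

for label in labels:
    # spacy.explain() returns a short description string,
    # or None when the label is not in the glossary.
    print(f"{label:>5}: {spacy.explain(label)}")
```

Labels that come from a non-English or non-German model may not be in the glossary, in which case the treebank documentation for that model is the place to look.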

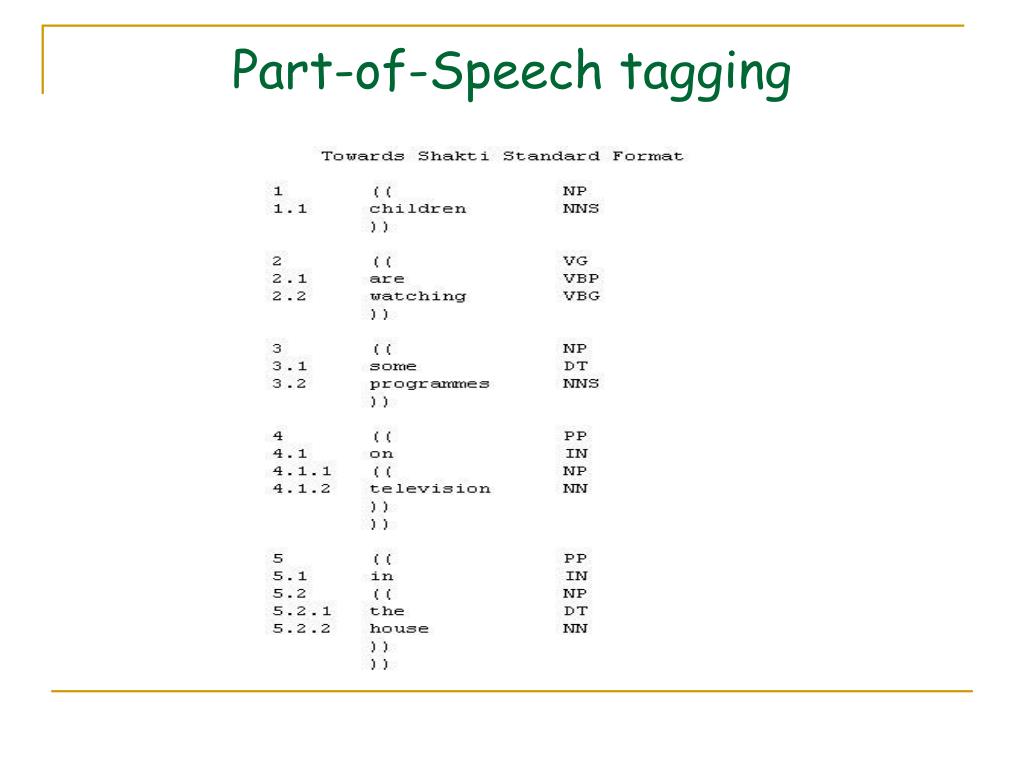
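To try displaCy's 'ent' style without downloading a model, one option is its manual mode, which accepts hand-written spans. The sketch below is only that: the entity spans are typed in by hand as an assumption for illustration and are not predictions from any spaCy model, and the helper name span is hypothetical.

```python
from spacy import displacy

text = "Apple is seeking 5 new data scientists with skills in Python, Pandas, and Spacy."


def span(substring, label):
    """Build a displaCy entity dict from the first occurrence of substring."""
    start = text.index(substring)
    return {"start": start, "end": start + len(substring), "label": label}


# Hand-written spans (illustrative assumption, NOT model output),
# listed in order of their start offset.
manual_doc = {
    "text": text,
    "ents": [span("Apple", "ORG"), span("5", "CARDINAL"), span("Python", "GPE")],
    "title": None,
}

# jupyter=False makes render() return the HTML markup as a string;
# inside a notebook, jupyter=True displays it inline instead.
html = displacy.render(manual_doc, style="ent", manual=True, jupyter=False)
print(html[:200])
```

With a trained pipeline you would pass the Doc itself, for example displacy.render(doc, style="ent"), and leave out manual=True.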

Parts of speech are also known as word classes, which describe the role of the words in sentences relative to their neighbors (such as verbs and nouns). The Danish UD treebank, for example, uses 17 universal part-of-speech tags.

Some of the most widely used spaCy token attributes can be extracted and put in a Pandas dataframe. These include Parts of Speech or POS tags, stored in token.pos_, which contain a value such as NUM or NOUN to indicate what spaCy detected. They're usually used in conjunction with token.tag_, which provides some deeper information. You can also see things like the shape of the word (how many characters it has and what case was used), and whether the word is a commonly used stop word, such as “is”, “with”, or “in”. Stopwords rarely add much to models, so they often get stripped out to make models quicker and more effective.

Another neat thing you can do with spaCy is use the additional displaCy module to visualise POS tagging. The displaCy visualizer works inside a Jupyter notebook and takes the spaCy document and a style option, then returns a visualisation showing the tagged text. The ent style in displaCy labels any entities identified. In the example, it picks out Apple, Spacy, and NLP as ORG entities or organisations, Python as a GPE or geopolitical entity, and 5 as a CARDINAL or number. As you can see, it doesn’t always detect entities correctly when they’re a bit obscure, like the ones in our test sentence.

spaCy is an excellent Natural Language Processing framework that performs fast and accurate Part-Of-Speech tagging and dependency parsing in many languages. The Ginza model based on spaCy, released by Megagon Labs, performs extremely well in Japanese.

To answer the second part of your question, you can add special tokenisation rules to the tokeniser, as described in the spaCy docs. (EDIT: this solution used spaCy 2.0.12, IIRC.)
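The special tokenisation rules mentioned above can be sketched as follows. This is a minimal example, assuming a blank English pipeline (so no model download is required) and using "gimme" as an illustrative word to split; it is not necessarily the rule the original question needed.

```python
import spacy
from spacy.symbols import ORTH

# A blank pipeline contains only the rule-based English tokenizer.
nlp = spacy.blank("en")

before = [token.text for token in nlp("gimme that")]

# Register a special case: split "gimme" into two tokens.
# The ORTH values must concatenate back to the original string.
nlp.tokenizer.add_special_case("gimme", [{ORTH: "gim"}, {ORTH: "me"}])

after = [token.text for token in nlp("gimme that")]

print(before)
print(after)
```

The same call works on the tokenizer of a loaded model such as en_core_web_sm, since the rule is attached to nlp.tokenizer rather than to any trained component.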
The most commonly used model is en_core_web_sm, but other, more accurate models are available. To install it, run the command !python3 -m spacy download en_core_web_sm and wait a couple of minutes for everything to install.

The sample sentence is: "Apple is seeking 5 new data scientists with skills in Python, Pandas, and Spacy."

Part-of-speech tagging is the task of classifying words into their part of speech, based on both their definition and context. We’ve already seen that the token returned by spaCy contains the text, such as the word, number, or punctuation, within the token.text element. However, there is a wide range of other token attributes you can also extract with spaCy.
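One way to collect several token attributes into a Pandas dataframe is sketched below. The column selection is an assumption made for illustration, and the fallback to a blank pipeline exists only so the sketch still runs when en_core_web_sm has not been downloaded; in that case pos and tag stay empty because no tagger is present.

```python
import pandas as pd
import spacy

try:
    # Trained pipeline: fills in pos_ and tag_.
    nlp = spacy.load("en_core_web_sm")
except OSError:
    # Fallback (assumption for this sketch): tokenizer only,
    # so pos_ and tag_ will be empty strings.
    nlp = spacy.blank("en")

doc = nlp("Apple is seeking 5 new data scientists with skills in Python, Pandas, and Spacy.")

# One row per token, one column per attribute.
rows = [
    {
        "text": token.text,
        "pos": token.pos_,
        "tag": token.tag_,
        "shape": token.shape_,
        "is_stop": token.is_stop,
    }
    for token in doc
]

df = pd.DataFrame(rows)
print(df)
```

From here, stop words can be filtered out with ordinary dataframe operations, for example df[~df["is_stop"]].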

spaCy is one of the most popular Python packages for Natural Language Processing. Alongside the Natural Language Toolkit (NLTK), spaCy provides a huge range of functionality for a wide variety of NLP tasks. It supports all common tasks out of the box, and is also highly extensible.

In this simple tutorial, we’ll use spaCy for Parts of Speech tagging (or POS tagging) and NLP text preprocessing. We’ll tokenize the words in a sentence, tokenize the sentences in a paragraph, use lemmatization, detect stopwords, and extract parts of speech and their tags to a Pandas dataframe.

To get started, open a Jupyter notebook and install the spaCy package via the Pip Python package management system using !pip3 install spacy. Once this is installed, you’ll need to download a spaCy model.
